MSc thesis project proposal

Efficient Spiking Neural Network Architectures with Spatio-Temporal Pruning

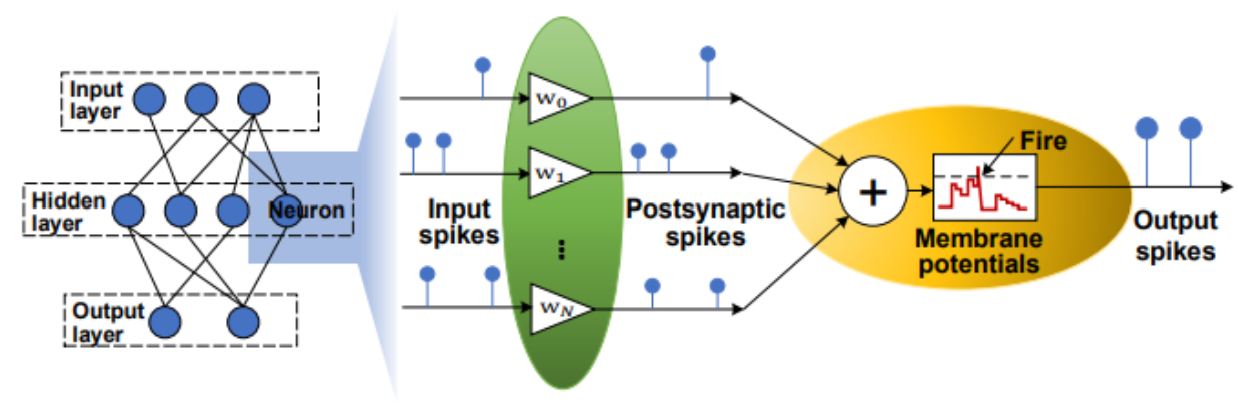

Spiking Neural Networks (SNNs) are a promising alternative to conventional deep neural networks because, like the human brain, they process information in an event-driven manner. However, a drawback of SNNs is their high inference latency, caused by the large number of timesteps needed to reach good accuracy. High latency also hurts energy efficiency, because every additional timestep adds membrane-potential updates and spikes that must be processed. Incorporating pruning, both spatial (removing weights or channels) and temporal (reducing the number of timesteps), could therefore help realize the full energy benefits of SNNs.
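To make the latency/energy trade-off concrete, the following minimal sketch simulates a single integrate-and-fire (IF) neuron with reset-by-subtraction, the neuron model commonly used in ANN-to-SNN conversion. It assumes a constant, threshold-normalized input and a threshold of 1.0; the function names `simulate_if_neuron` and `relu_target` and the activation values are hypothetical and for illustration only. For inputs in [0, 1] the firing rate approximates a clipped ReLU with an error of at most about 1/T, while the number of spikes (and hence downstream synaptic work) grows roughly linearly with the number of timesteps T.

```python
def simulate_if_neuron(x, timesteps, threshold=1.0):
    """Drive one integrate-and-fire neuron with a constant input `x` for
    `timesteps` steps and return the number of output spikes."""
    v = 0.0          # membrane potential
    spikes = 0
    for _ in range(timesteps):
        v += x                       # integrate the input
        if v >= threshold:           # fire when the threshold is crossed
            spikes += 1
            v -= threshold           # reset by subtraction keeps the residual
    return spikes


def relu_target(x, threshold=1.0):
    """Clipped ReLU that the firing rate should approximate (assumed normalization)."""
    return min(max(x, 0.0), threshold) / threshold


if __name__ == "__main__":
    activations = [-0.2, 0.13, 0.37, 0.5, 0.81]   # hypothetical normalized ANN activations
    for T in (4, 16, 64, 256):
        counts = [simulate_if_neuron(a, T) for a in activations]
        max_err = max(abs(c / T - relu_target(a)) for c, a in zip(counts, activations))
        # Each spike costs one accumulate per outgoing synapse, so the total
        # spike count is a rough proxy for downstream work (and thus energy).
        print(f"T={T:4d}  max rate error={max_err:.4f}  total spikes={sum(counts)}")
```

Running the script shows the approximation error shrinking as T grows while the total spike count rises roughly in proportion to T, which is the tension between accuracy, latency, and energy that this project targets.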

Background Materials

[1] Rueckauer B, Lungu I A, Hu Y, et al. Conversion of continuous-valued deep networks to efficient event-driven networks for image classification[J]. Frontiers in Neuroscience, 2017, 11: 682.

[2] Kheradpisheh S R, Ganjtabesh M, Thorpe S J, et al. STDP-based spiking deep convolutional neural networks for object recognition[J]. Neural Networks, 2018, 99: 56-67.

[3] Han B, Srinivasan G, Roy K. RMP-SNN: Residual membrane potential neuron for enabling deeper high-accuracy and low-latency spiking neural network[C]//Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR). 2020: 13558-13567.

[4] Chowdhury S S, Garg I, Roy K. Spatio-temporal pruning and quantization for low-latency spiking neural networks[C]//2021 International Joint Conference on Neural Networks (IJCNN). IEEE, 2021: 1-9.

Assignment

Survey existing SNN training and ANN-to-SNN conversion algorithms [1-3] and pruning methods [4-5] to build a comprehensive understanding of the field.

Design a lightweight SNN with competitive accuracy and low latency using spatial and temporal pruning methods.

Implement the lightweight SNN as an ASIC and compare its power consumption and classification rate with the prior art.
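The two pruning axes named in the assignment can be illustrated with a toy event-driven layer. This is a generic sketch under stated assumptions, not the method of [4]: spatial pruning is modeled as plain magnitude-based weight pruning, and temporal pruning as simply running inference for fewer timesteps. The layer size, sparsity, input rates, and the functions `magnitude_prune` and `run_layer` are all hypothetical. The sketch counts synaptic operations (one accumulate per nonzero weight reached by an input spike) as a rough proxy for the energy an event-driven ASIC would spend; accuracy is not evaluated.

```python
import random


def magnitude_prune(weights, sparsity):
    """Spatial pruning (illustrative): zero the `sparsity` fraction of weights
    with the smallest magnitude. Ties at the cutoff may prune slightly more."""
    magnitudes = sorted(abs(w) for row in weights for w in row)
    k = int(sparsity * len(magnitudes))
    if k == 0:
        return [row[:] for row in weights]
    cutoff = magnitudes[k - 1]
    return [[0.0 if abs(w) <= cutoff else w for w in row] for row in weights]


def run_layer(weights, in_rates, timesteps, threshold=1.0, seed=0):
    """Event-driven simulation of one fully connected IF layer.

    weights[j][i] connects input i to output neuron j. Inputs are rate coded as
    Bernoulli spikes per timestep. Returns output firing rates and the number of
    synaptic operations (one accumulate per nonzero weight per input spike)."""
    rng = random.Random(seed)
    n_out, n_in = len(weights), len(weights[0])
    # Store only nonzero synapses, as sparse hardware would skip pruned ones.
    fanout = [[(j, weights[j][i]) for j in range(n_out) if weights[j][i] != 0.0]
              for i in range(n_in)]
    v = [0.0] * n_out
    out_spikes = [0] * n_out
    ops = 0
    for _ in range(timesteps):
        for i, rate in enumerate(in_rates):
            if rng.random() < rate:          # input neuron i spikes at this step
                for j, w in fanout[i]:
                    v[j] += w                # accumulate the weight
                    ops += 1
        for j in range(n_out):
            if v[j] >= threshold:            # fire and reset by subtraction
                out_spikes[j] += 1
                v[j] -= threshold
    return [s / timesteps for s in out_spikes], ops


if __name__ == "__main__":
    rng = random.Random(42)
    n_in, n_out = 100, 10                    # hypothetical layer size
    weights = [[rng.gauss(0.0, 0.1) for _ in range(n_in)] for _ in range(n_out)]
    in_rates = [rng.random() * 0.5 for _ in range(n_in)]

    _, base_ops = run_layer(weights, in_rates, timesteps=64)
    pruned = magnitude_prune(weights, sparsity=0.7)            # spatial: drop 70% of weights
    _, pruned_ops = run_layer(pruned, in_rates, timesteps=16)  # temporal: 64 -> 16 steps
    print(f"baseline ops: {base_ops}, pruned ops: {pruned_ops}")
```

Because pruned synapses are skipped entirely and each timestep adds another round of input spikes, the operation count in this toy model scales roughly with (1 - sparsity) × T, so the two axes compound. Whether accuracy survives such aggressive pruning has to be established on the actual task.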

Requirements

Experience with Python and PyTorch/TensorFlow.

Experience with Verilog (preferred) or VHDL.

Knowledge of the ASIC design flow will be a plus.

Contact

dr. Chang Gao

Electronic Circuits and Architectures Group

Department of Microelectronics

Last modified: 2022-10-10