MSc thesis project proposal

[2023] Efficient Transformer for Keyword Spotting

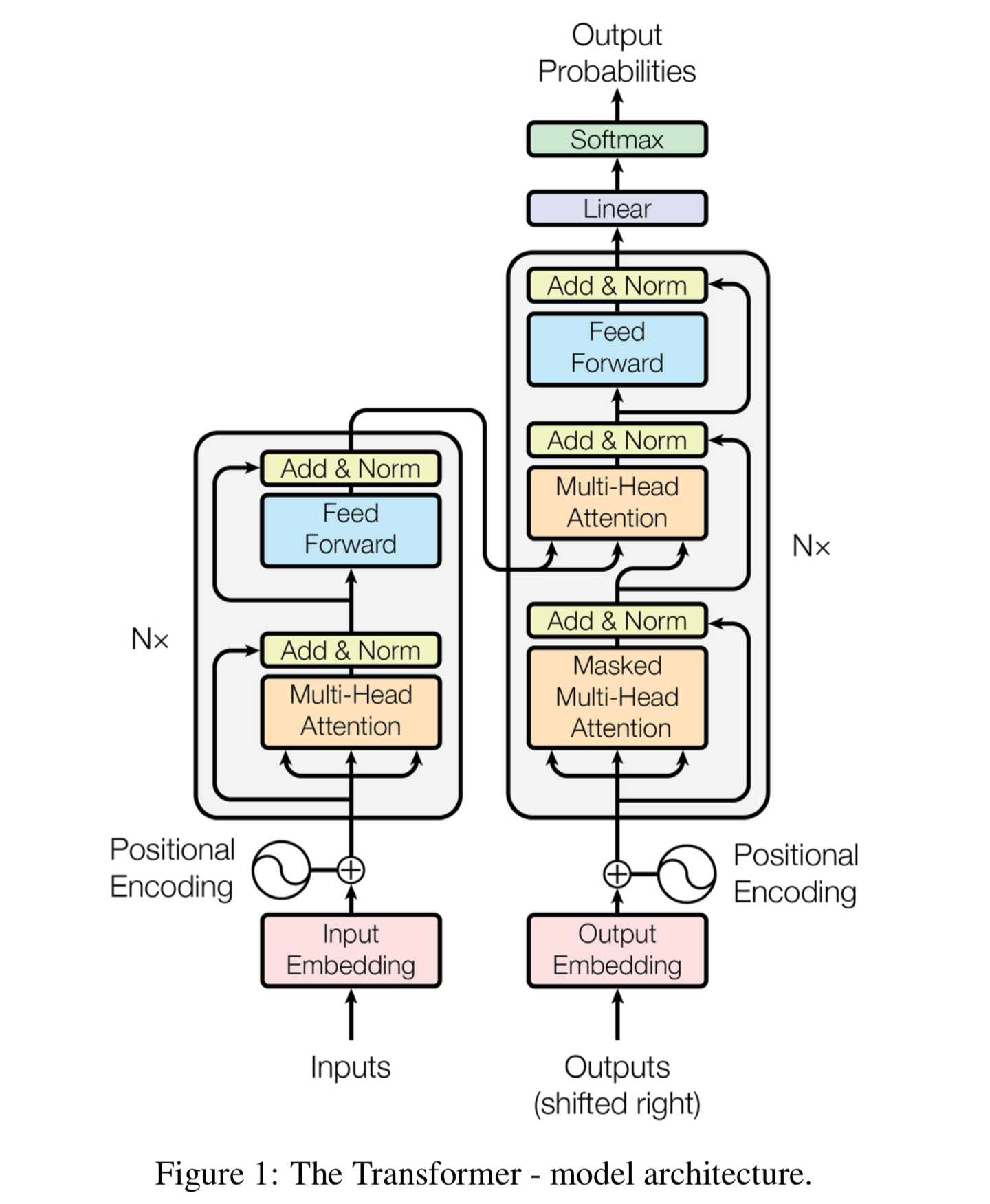

Always-on keyword spotting on ultra-low-power integrated circuits is a hot topic in chip design for artificial intelligence systems. Previous work used spiking neural networks (SNNs), convolutional neural networks (CNNs), and recurrent neural networks (RNNs), but state-of-the-art accuracy has recently been achieved by transformers. However, transformers have a large memory footprint and require many arithmetic operations, which makes them difficult to deploy on tiny embedded systems with scarce resources. In this project, you will develop algorithmic methods to compress transformer neural networks and reduce their memory and arithmetic cost.
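To make "large memory footprint and many arithmetic operations" concrete, the sketch below counts the weights and multiply-accumulate operations (MACs) of a stack of standard transformer encoder layers (Vaswani et al., 2017). The dimensions in `EncoderConfig` are illustrative assumptions, not values given in this proposal, and the count ignores softmax, activations, input embeddings, and the classifier head.

```python
from dataclasses import dataclass


@dataclass
class EncoderConfig:
    """Illustrative encoder dimensions (assumed for this sketch, not from the proposal)."""
    d_model: int = 64    # embedding width
    d_ff: int = 256      # hidden width of the feed-forward block
    seq_len: int = 99    # tokens (e.g. audio frames) per inference
    n_layers: int = 12   # number of stacked encoder layers


def params_per_layer(c: EncoderConfig) -> int:
    """Weights and biases of one standard encoder layer."""
    attn = 4 * (c.d_model * c.d_model + c.d_model)  # Q, K, V and output projections
    ffn = (c.d_model * c.d_ff + c.d_ff) + (c.d_ff * c.d_model + c.d_model)
    norms = 2 * 2 * c.d_model                        # two LayerNorms (scale and shift)
    return attn + ffn + norms


def macs_per_layer(c: EncoderConfig) -> int:
    """Multiply-accumulate operations of one encoder layer for one input sequence."""
    t, d = c.seq_len, c.d_model
    projections = 4 * t * d * d   # Q, K, V and output projections
    attention = 2 * t * t * d     # Q K^T scores plus the attention-weighted sum of V
    ffn = 2 * t * d * c.d_ff      # the two feed-forward matrix multiplications
    return projections + attention + ffn


def footprint_bytes(c: EncoderConfig, bytes_per_weight: int) -> int:
    """Bytes needed to store all encoder weights at the given precision."""
    return c.n_layers * params_per_layer(c) * bytes_per_weight


cfg = EncoderConfig()
print("parameters:", cfg.n_layers * params_per_layer(cfg))
print("MACs per inference:", cfg.n_layers * macs_per_layer(cfg))
print("fp32 weights (bytes):", footprint_bytes(cfg, 4))
print("int8 weights (bytes):", footprint_bytes(cfg, 1))
```

With these assumed dimensions the encoder holds about 0.6 M parameters (about 2.4 MB in fp32) and needs roughly 73 M MACs per inference. Note that the attention term grows quadratically with `seq_len`, while storing weights in int8 instead of fp32 cuts weight memory by 4×.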

Background Materials:

- Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N. Gomez, Łukasz Kaiser, and Illia Polosukhin. 2017. Attention is all you need. In Proceedings of the 31st International Conference on Neural Information Processing Systems (NIPS'17). Curran Associates Inc., Red Hook, NY, USA, 6000–6010.

- Zuzana Jelčicová and Marian Verhelst. 2022. Delta Keyword Transformer: Bringing Transformers to the Edge through Dynamically Pruned Multi-Head Self-Attention. TinyML'22.

Assignment

1. Literature review of previous efforts in model compression of transformers

2. Develop new model compression methods for transformers that take into account the hardware constraints of tiny embedded systems, such as an ARM Cortex-M1 microcontroller.
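As a reference point for item 2, a common baseline for microcontroller targets is post-training int8 quantization, because integer arithmetic suits small cores; the Cortex-M1, like other ARMv6-M cores, has no hardware floating-point unit. The sketch below shows symmetric per-tensor quantization and an integer-only matrix-vector product. It is a generic textbook technique chosen for illustration, not a method prescribed by this project, and the function names and example values are hypothetical.

```python
from typing import List, Tuple

Matrix = List[List[float]]


def quantize_int8(w: Matrix) -> Tuple[List[List[int]], float]:
    """Symmetric per-tensor int8 quantization: w ~= scale * q, with q in [-127, 127]."""
    max_abs = max(abs(x) for row in w for x in row)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [[max(-127, min(127, round(x / scale))) for x in row] for row in w]
    return q, scale


def int8_matvec(q: List[List[int]], x_q: List[int]) -> List[int]:
    """Integer-only product; on a microcontroller the sums would live in int32 accumulators."""
    return [sum(a * b for a, b in zip(row, x_q)) for row in q]


def float_matvec(w: Matrix, x: List[float]) -> List[float]:
    """Floating-point reference result."""
    return [sum(a * b for a, b in zip(row, x)) for row in w]


# Hypothetical weights and input, for illustration only.
w = [[0.50, -0.25, 0.10], [-0.80, 0.05, 0.30]]
x = [1.0, -0.5, 0.25]

w_q, w_scale = quantize_int8(w)
x_q, x_scale = quantize_int8([x])
acc = int8_matvec(w_q, x_q[0])
y_approx = [a * w_scale * x_scale for a in acc]  # rescale the integer result to float
print("float:", float_matvec(w, x))
print("int8: ", y_approx)
```

Here the int8 result matches the floating-point one to within a few thousandths while the weights take a quarter of the fp32 storage. More aggressive schemes (lower bit widths, pruning, or skipping computations dynamically) trade this accuracy margin for further savings.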

Requirements

1. Basic knowledge of, and experience with, deep neural networks.

2. Python and PyTorch programming.

3. (Optional) Knowledge of digital circuit design and microcontrollers.

Contact

dr. Chang Gao

Electronic Circuits and Architectures Group

Department of Microelectronics

Last modified: 2023-11-12